I've watched this play out dozens of times. A company gets excited about AI, launches a pilot, and three to six months later quietly kills it. The press release never comes. The initiative just fades away. When you ask what happened, you get vague answers about "learnings" and "next phases" that never materialize.

The pattern is remarkably consistent. It's not that the technology didn't work—it usually did, at least in controlled conditions. The failures happen for predictable, avoidable reasons that have nothing to do with the capabilities of AI and everything to do with how companies approach these projects.

The three failure modes

The Demo Trap

It usually starts the same way. Someone sees a demo, maybe at a conference or from a vendor. The AI is summarizing documents, generating reports, answering questions about company data. It looks incredible. Everyone gets excited. The mandate comes down: "Let's do this."

Three months later, they have a demo. A really polished demo that nobody actually uses for real work. Why? Because demos solve demo problems. Real work has edge cases, messy data, political considerations, integration requirements. The demo looked great precisely because it dodged all of that complexity. The trap is confusing "impressive" with "useful"—they're not the same thing, and the gap between them is where most pilots die.

Boil the Ocean

This failure mode comes from ambition. Someone decides they're going to "transform how the whole company works with AI." They form a committee, evaluate twelve vendors, draft a comprehensive strategy document. Six months in, they're still in the planning phase, still evaluating, still building the business case.

Meanwhile, some analyst down the hall automated their entire workflow with ChatGPT and a spreadsheet. Didn't ask permission, didn't form a committee, just solved their own problem. Big strategic initiative? Still planning. Scrappy analyst? Saving ten hours a week and wondering what everyone else is waiting for. The trap is waiting for perfect instead of shipping something—and perfect never comes.

The Shiny Object

This one starts with a statement like "we need to do something with AI." Not "we have this painful process eating forty hours a week" or "our compliance team is drowning in document review." Just AI, because everyone else is doing it and we should too. The motivation is fear of missing out, not a genuine problem to solve.

So they pick something that sounds good—a chatbot for customers, an AI assistant for employees, something they can announce and put in the quarterly report. Six months later, nobody uses it. It didn't solve a real problem because there was never a real problem to begin with. It solved a press release problem, and that's not enough to sustain adoption. The trap is starting with the technology instead of the pain.

What actually works

The pilots that succeed have three things in common. None of them are complicated, but all of them require discipline that most organizations struggle to maintain when AI hype is running high.

They're boring

The best AI use cases are the ones nobody's excited to talk about at conferences. Not chatbots, not "AI strategy," not anything that sounds good in a press release. Boring stuff: data reconciliation, compliance document review, the spreadsheet someone's been manually updating every Monday for three years because nobody ever built a proper system for it.

Boring problems make great pilots because they have clear before-and-after states you can measure. They carry minimal political risk—nobody's career is threatened by automating a tedious process. And most importantly, someone actually wants the problem solved. They've been complaining about it for years. Exciting projects get attention. Boring projects get results.

They're measurable from day one

Before building anything, successful pilots answer a specific question: "How will we know if this worked?" Not "we'll be more productive" or "it will improve efficiency"—those aren't metrics, they're vibes. I want to hear something like "this process takes Sarah twelve hours every week, and if we can get it under two hours, that's success."

If you can't define the success metric before you build anything, you're not ready to run a pilot. You'll end up with something that "kind of works" but nobody can say whether it was worth the investment. And when budget review comes around, "kind of works" loses to projects with clear ROI every time.
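To make that concrete, here's a rough sketch of what writing the metric down before you build might look like. The twelve-hour baseline and two-hour target come from the Sarah example above; everything else (the `PilotMetric` name, its fields, and the 1.5-hour measurement) is made up for illustration, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotMetric:
    """A success metric agreed on before anything is built (hypothetical structure)."""

    process: str
    baseline_hours_per_week: float  # what the process costs today
    target_hours_per_week: float    # success means getting under this number

    def hours_saved(self, measured_hours_per_week: float) -> float:
        """Weekly hours saved relative to the baseline."""
        return self.baseline_hours_per_week - measured_hours_per_week

    def met_target(self, measured_hours_per_week: float) -> bool:
        """True only if the measured time is strictly under the target."""
        return measured_hours_per_week < self.target_hours_per_week


# Baseline and target from the example in the text.
metric = PilotMetric(
    process="Sarah's weekly process",
    baseline_hours_per_week=12.0,
    target_hours_per_week=2.0,
)

measured = 1.5  # illustrative measurement after the pilot, not real data
print(f"Hours saved per week: {metric.hours_saved(measured)}")
print(f"Met target: {metric.met_target(measured)}")
```

The point isn't the code. It's that the baseline and the target are written down as numbers before the pilot starts, so nobody has to argue later about whether it "kind of works."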

They ship in weeks, not quarters

Good pilots have a two-week checkpoint, not a two-month checkpoint. In two weeks, they have something running on real data. It won't be perfect, it won't handle every edge case, but it will be real. They can show it to stakeholders, get feedback, and iterate. The momentum sustains itself because people can see progress.

If your timeline is measured in quarters, you're not running a pilot. You're running a project that might get cancelled when priorities shift, budgets tighten, or the executive sponsor moves to a different role. Pilots need to prove value fast enough to survive the organizational realities that kill slow-moving initiatives.

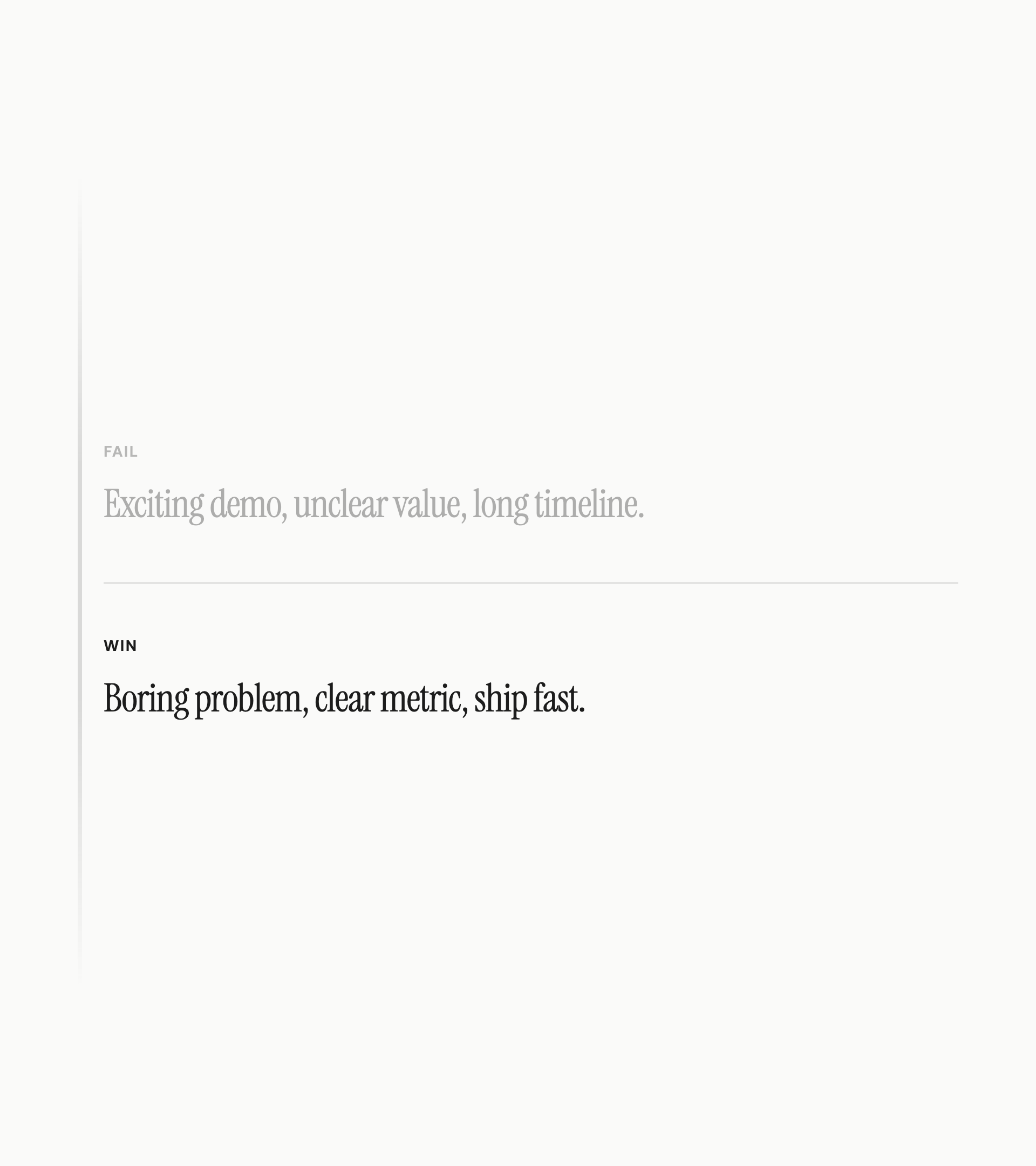

The pattern

Failed pilots share a pattern: exciting demo, unclear value, long timeline. Successful pilots have the opposite: boring problem, clear metric, ship fast. The companies getting real value from AI aren't the ones with the biggest budgets or the most sophisticated technology strategies. They're the ones who picked a specific, painful, measurable problem and just solved it.

If you're planning a pilot, ask three questions. Is this a real problem that someone actually wants solved, rather than a solution looking for a problem? Can we measure success in terms that matter to the business? Can we ship something real in two weeks? If the answer is yes to all three, you've got a good shot. If not, you're probably setting yourself up for a quiet failure six months from now.
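If it helps to have the three questions as a checklist you can't wave away, here's a minimal sketch. The `PilotCheck` class, its field names, and the two sample pilots are all hypothetical; the only things taken from the text are the three questions and the two-week bar.

```python
from __future__ import annotations

from dataclasses import dataclass

MAX_WEEKS_TO_SHIP = 2  # the two-week bar from the third question


@dataclass(frozen=True)
class PilotCheck:
    """Answers to the three pre-pilot questions (hypothetical structure)."""

    real_problem: bool     # does someone actually want this solved?
    business_metric: bool  # can success be measured in business terms?
    weeks_to_ship: int     # estimated weeks until something runs on real data

    def failures(self) -> list[str]:
        """Return the questions this pilot fails; an empty list means go."""
        failed = []
        if not self.real_problem:
            failed.append("No one is asking for this problem to be solved")
        if not self.business_metric:
            failed.append("No success metric that matters to the business")
        if self.weeks_to_ship > MAX_WEEKS_TO_SHIP:
            failed.append("Cannot ship something real in two weeks")
        return failed


# Two illustrative pilots, not real projects.
boring_pilot = PilotCheck(real_problem=True, business_metric=True, weeks_to_ship=2)
shiny_pilot = PilotCheck(real_problem=False, business_metric=False, weeks_to_ship=26)

for name, pilot in [("boring", boring_pilot), ("shiny", shiny_pilot)]:
    problems = pilot.failures()
    print(name, "-> good shot" if not problems else f"-> {len(problems)} red flags")
```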